RedTech Magazine May/June 2026 champions intelligent adaptation

LONDON — Compared to radio, television, cinema and now streaming services get most of the attention when it comes to technological innovation. This is puzzling given the numerous advances made during the sound-only medium’s long history. This innovative spirit continues today, with radio stations now making as much of artificial intelligence as their visually oriented counterparts, if not more.

The sector has applied AI technology for at least 10 years, but the rate of implementation and the scale of what is achievable have increased considerably in the past year, largely due to generative AI. GenAI is a more sophisticated form of AI that can produce text and voice material that seems realistically human rather than the product of a software program. The basis for this is the generative pretrained transformer (GPT) developed by OpenAI, which introduced the now best-known GenAI system, ChatGPT, in November 2022.

The GPT multimodal language model, now in its fourth iteration — GPT-4 — also forms the basis of most of the currently available chatbots. Radio-specific variations are now appearing, with ENCO offering ENCO-GPT for automated transcription of speech interviews. “Radio is all about words, but it can also be represented as text,” observes Bill Bennett, media solutions and accounts manager at ENCO. “It’s a natural extension to include both GenAI, deep neural networks and machine learning (ML) for creating automated text and AI-generated transcripts.”

GenAI is also capable of creating original text — something broadcast consultant Quentin Howard exploited to develop an on-air link for his Boom Radio Night Ride program in February. “I wanted to see how natural I could train it to be,” he wrote in a Facebook post about his experiment. “It’s obviously very context-dependent. For example, asking it to write a ‘DJ link’ produced cliched Americanisms.” With some “iterative refinement,” the system eventually produced a 45-second link in “BBC style” back-announcing “Forever Young” by Alphaville and introducing “Dreams of the Everyday Housewife” by Glen Campbell. “It took longer to train the bot than it would have taken to write a link,” Howard concluded, “but now that it’s trained, it is returning frighteningly good links first time on other subjects.”

In his capacity as consultant engineer to Boom Radio, Howard further experimented with cloning DJ voices. “During a prolonged IRN outage, our presenters were reading the news, and the listeners responded very positively,” he explains. “That got me thinking about cloning a couple of our DJs to read the news. Our tests show this kind of voice cloning is quite effective.”

In May this year, public broadcaster RTS Radio Télévision Suisse’s radio station Couleur 3 used AI to write links, build the playlist, and clone the voices of five of its presenters for a day’s “automated” broadcasting. This last aspect employed proprietary technology developed by Ukrainian company Respeecher. The technology is used in film, TV, games and podcasting and for re-creating the voices of people who have had their larynxes removed due to cancer.

“Respeecher focuses on voice synthesis, and when it comes to it, radio relies on voices with all the related limitations: a human can speak only in the voice they have right now in the language they know,” comments Respeecher’s chief executive, Alex Serdiuk. “The technology removes this limitation, enabling the process of voiceover from different angles. We did a project for BBC Radio and converted the host’s voice into a female and a kid’s voice while preserving all the emotions.”

Synthesizing on-air talent

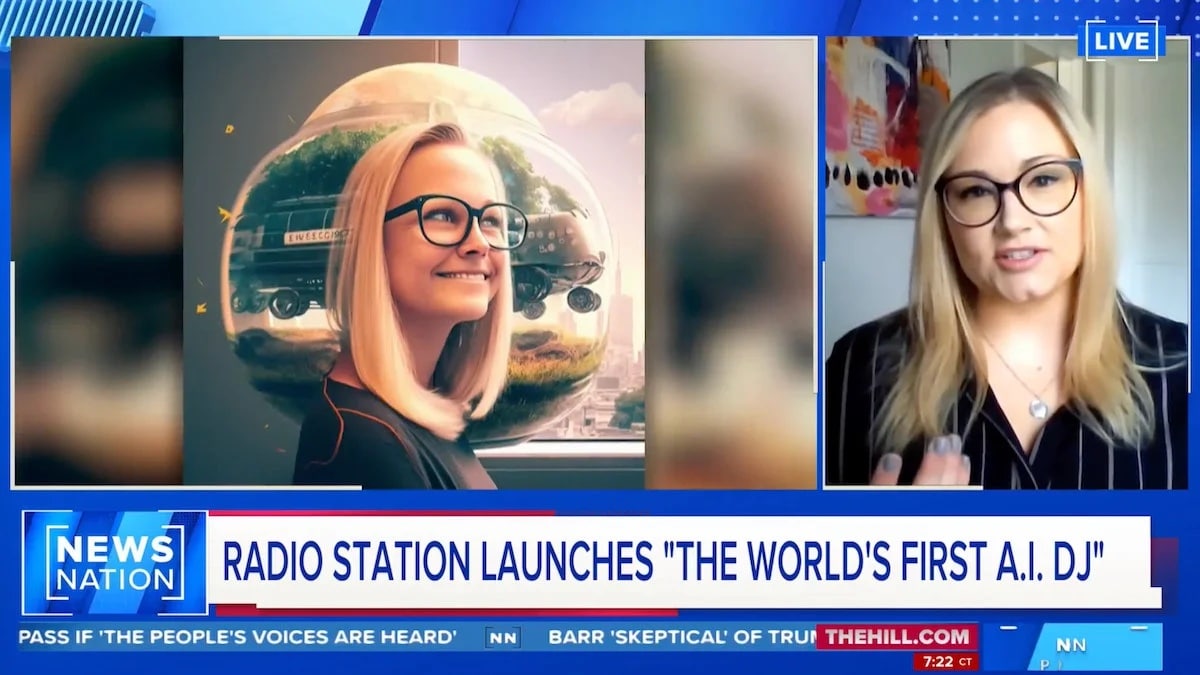

American station KBFF/Live 95.5 in Portland, Ore., is giving an AI presenter more airtime by having the station’s mid-morning host, Ashley “Z” Elzinga, share her show with a synthesized version of herself. The station created AI Ashley using RadioGPT, a new system launched this year by Futuri Media, which has been working with AI and ML in broadcasting for nearly 10 years. Zena Burns, senior vice president for content and special projects at Futuri, says RadioGPT is “more than just a homebrew of text-to-voice and ChatGPT,” describing it as “an end-to-end content solution” that combines Futuri’s Echo automation link and TopicPulse story discovery and social content system with GPT-4 and text-to-speech conversion technologies.

Burns comments that a station can use a system like RadioGPT to strengthen its lineup instead of depleting it. “While strong human talent that can create an emotional connection with listeners is — and will remain — the lifeblood of radio, RadioGPT can use AI to make the real-presenter-free parts surrounding them fresh, timely and relevant. Most AM/FM stations in North America have no more than two physically live and local dayparts, so the possibilities to pair this type of AI with human talent are incredible for radio.”

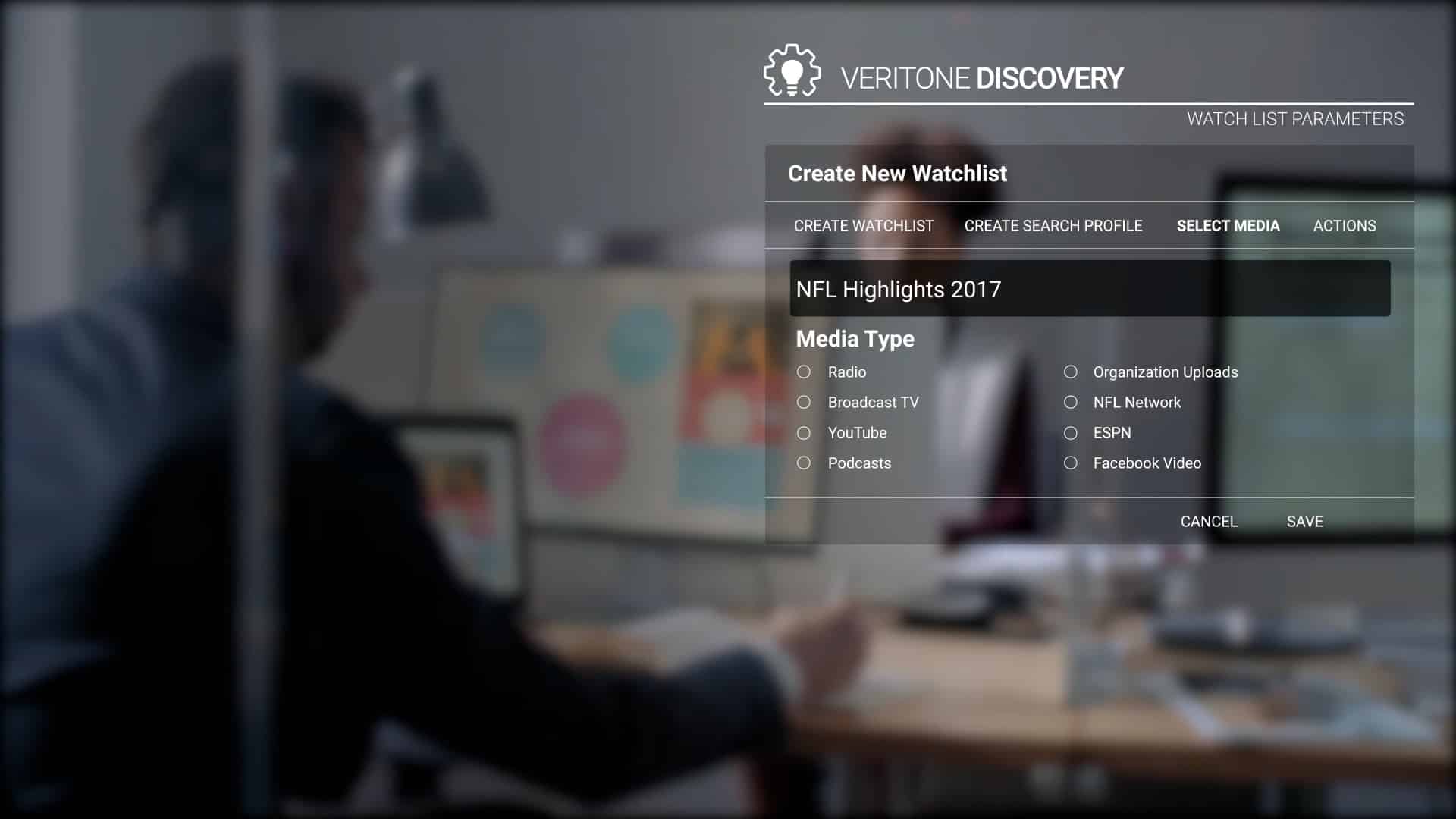

Veritone, which launched in 2014 and has implemented AI for broadcasting since its earliest days, now provides a range of services for different applications. These include synthetic voice creation and podcast management through its audio agency, Veritone One, and advertising data analysis using its aiWARE operating system and ChatGPT, audio, biometrics and data engines.

“When you buy digital today, such as social media, you get data on what was delivered,” comments Paul Cramer, managing director of media and broadcast at Veritone. “When I’ve bought radio in the past, I just got an invoice. What we can do now is provide the same level of data insights because it would be impractical, if not impossible, for a human to listen to 24 hours of a broadcast and write down every time an integration happened on air. Also, from a content creation viewpoint, we can use GenAI to create a better listening experience by finding more relevant material for storytelling.”

Replacing humans

There are concerns that AI could replace humans, with presenters and production staff replaced by cloned voices and automated systems. “I would like to believe that people in radio will use AI technology to make their lives easier through increased creativity and productivity,” he said. More serious are the moral and rights issues surrounding voice synthesis. “Artists will quite quickly find they need to protect their voice copyright to stop unauthorized cloning,” comments Quentin Howard of Boom Radio. “Then there’s a whole minefield in cloning deceased personalities. Some stations will charge hell for leather toward AI voice, and I’m sure we’ll see generative voice stations popping up everywhere. Soon enough, there will be a catch-up race of the ethics, morals, copyright cases and regulatory oversight.”

Such a race is already underway with the creation of the Partnership on AI and its Framework on Responsible Practices for Synthetic Media. PAI supporters include BBC R&D, Adobe, CBC, Meta, Microsoft and OpenAI. Respeecher joined in February this year and has also adopted Adobe’s Coalition for Content Provenance and Authenticity standards for its Voice Marketplace AI voice bank. “All audio data will be ‘signed,’ with metadata encrypted in the audio files containing information about the tool, i.e., Respeecher, which created the synthetic sound,” explains Serdiuk. “It can help content creators protect their works and prevent misuse, such as audio deep fakes.”

There will always be those who will abuse new technologies, but if used the right way, AI has the potential to facilitate even more innovative radio in the future.

The author trained as a radio journalist and worked for BFBS Radio as a technical operator, producer and presenter before moving into magazine writing during the late 1980s. He recently returned to radio through his involvement in an RSL station near where he lives on the south coast of England.
