LONDON — Several years ago, the BBC Research & Development audio team began experimenting with immersive audio and device orchestration. The team wanted to find ways to give audiences a better listening experience via devices they already had at home.

It’s widely accepted that surround sound (when sound is emanating from all around you) provides a more immersive listening experience. The researchers wanted to find a way to create that effect in the home. They knew that most audiences don’t own speakers that support spatial audio.

“It’s becoming increasingly common for listeners to have access to different types of devices that are capable of reproducing sound, including mobile phones, tablets, and laptops,” explains Emma Young, graduate R&D engineer and the development producer on this project. “Device orchestration enables us to adapt the piece and make the best use of what people have in their homes.”

Connected Devices

The first trial production, “The Vostok-K Incident,” a story about the Cold War, was made in collaboration with the Universities of Surrey, Salford, and Southampton as part of the EPSRC-funded S3A project.

It involved a large interdisciplinary team, including a writer, sound designers, research scientists, and developers. They asked writer Ed Sellek to consider how to enhance the story by sending sounds to connected speakers.

“Then we worked with Eloise Whitmore and Tony Churnside from Manchester-based production company Naked Productions to record and mix the content using a novel format that is flexible enough to adapt to whatever devices are connected in the home,” said Young.

“Finally, we had to solve technical challenges related to delivering the content and synchronizing the connected devices, and put it all together with a user-friendly interface. The process we went through to create The Vostok-K Incident helped us to put tools and workflows in place for creating this type of experience.”

From the pilot, BBC R&D learned that audiences have an appetite for orchestrated content. “We used interaction logs to look at the time that listeners spent with The Vostok-K Incident, including the number of extra devices they connected and how they used the different options on the interface,” explained Young.

Further Work

“The results suggested that there is value in this approach to delivering audio drama: 79% of respondents loved or liked using phones as speakers, and 85% would use the technology again,” Young said.

“The interaction results gave helpful pointers for future experiences, suggesting that we should aim to get the greatest possible benefit out of few connected devices, ensure that content is impactful right from the start, and explore different types of user interaction. As well as this large-scale public trial, we have also performed controlled user testing and follow-up interviews.”

The results encouraged the team to focus its efforts on understanding the kinds of new media experiences that producers could make, and on developing a flexible, easy-to-use tool that makes the production of orchestrated content accessible.

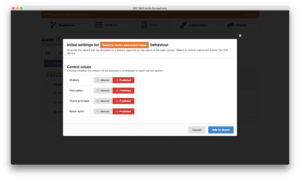

Further development work led to the creation of “Audio Orchestrator.” This tool enables users to take audio projects and rework them into interactive, 360° spatial audio that envelops listeners in sound from above and behind, sending sound to one, many, or all connected devices to tell a story in a new way.

Audio Orchestrator

“Audio Orchestrator allows creatives to synchronize several audio devices into a connected system and take an object-based approach to production, routing parts of an audio scene to one or multiple devices to make highly immersive content,” says Young.

“It is also possible to introduce interactive elements, where audiences can make choices during the experience, for example, to steer the narrative or to switch parts of an audio scene on or off. The tool can also allow the production of experiences that sync together speakers in multiple locations, for example, enabling people in two households to experience the same content at the same time.”
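The object-based routing Young describes can be illustrated with a minimal Python sketch. It is not Audio Orchestrator's actual data model or API; the `AudioObject` class, the rule names ("main", "any_aux", "all"), and the `route_objects` function are hypothetical, chosen only to show how parts of a scene might be assigned to whichever devices happen to be connected.

```python
from dataclasses import dataclass


@dataclass
class AudioObject:
    name: str
    # Hypothetical routing rule: "main" stays on the main device,
    # "any_aux" goes to one auxiliary device, "all" goes to every device.
    rule: str = "main"


def route_objects(objects, device_ids):
    """Return {device_id: [object names]} for the connected devices.

    The first entry in device_ids is treated as the main device.
    Objects that ask for an auxiliary device fall back to the main
    device when none is connected, so nothing is lost from the mix.
    """
    if not device_ids:
        raise ValueError("at least one device is required")
    main, aux = device_ids[0], device_ids[1:]
    routing = {device: [] for device in device_ids}
    next_aux = 0
    for obj in objects:
        if obj.rule == "all":
            for device in device_ids:
                routing[device].append(obj.name)
        elif obj.rule == "any_aux" and aux:
            # Spread auxiliary objects round-robin over the extra devices.
            routing[aux[next_aux % len(aux)]].append(obj.name)
            next_aux += 1
        else:
            routing[main].append(obj.name)
    return routing


# An invented scene, for illustration only.
scene = [
    AudioObject("narrator", "main"),
    AudioObject("radio_chatter", "any_aux"),
    AudioObject("footsteps", "any_aux"),
    AudioObject("alarm", "all"),
]
```

With a single device, every object lands on that device. Adding a phone and a tablet moves the two auxiliary objects onto them while the narrator stays on the main device, which mirrors the idea of a mix that adapts to what the listener has connected.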

Audio Orchestrator is available for anyone to use through BBC MakerBox. This is a platform for creators to access tools that enable exploration of new technologies and a community for discussion and sharing of ideas. It comes with an example project to get new users up and running, and a set of detailed documentation, with technical support available through the MakerBox forums.

In August, the BBC put these developments into practice through a production that came about because of the coronavirus.

When its international tour was cancelled because of the Covid-19 pandemic, United Kingdom-based theater company, 1927, was commissioned by “BBC Arts Culture In Quarantine” with the support of “Arts Council England” and “The Space” (an organization that helps artists and organizations reach new audiences digitally) to create an innovative immersive audio experience for BBC Radio 3.

Radio Production

Called “Decameron Nights,” this radio production is a trio of curious folk tales that combines haunting music with captivating sound effects and lends itself well to being re-imagined through orchestration. The stories originate with Italian writer Giovanni Boccaccio, who produced what is considered his masterpiece in response to the great bubonic plague of 1348, which had profound effects on the course of European history.

“This was the first pilot produced with BBC R&D’s production tool, Audio Orchestrator,” explains Young. “While the stereo version aired for radio audiences in mid-August, listeners can also experience the orchestrated version on BBC Taster, the broadcaster’s platform for trialing new and experimental technology with the public. We’ve got more pilots in the pipeline, so watch this space!”

But what was the experience like for the theater company 1927? “The potential of the Audio Orchestrator struck us as huge,” states Suzanne Andrade, writer and director. “We have but dipped our toe in the water of this potential with Decameron Nights.”

Making it Work

Paul Barritt, Illustration Artwork & Sound at 1927, adds, “Working with the R&D team at the BBC on Audio Orchestrator was great fun! It’s actually quite a simple piece of software to use once you understand the basic principles and it’s very effective. I was able to get several devices working almost straight away. It was a giddy thrill indeed.”

“I think the secret to using it is, not to think of it as a surround sound thing. But rather as a set of objects placed around a space that can make noises. In this sense, I think some very interesting outcomes could be developed. I found it nice to walk among the devices, for example. Possibly even walk with them. It has amazing potential, and there is a great team behind it. They are all really easy to work with and keen to experiment further with what can be achieved.”

The Future

So, the future looks exciting for this type of sound production. But what should creative sound designers bear in mind when it comes to producing such immersive sound programs?

The advice from BBC R&D is to keep different sounds separate in the production process, which gives more control over what you can do with them. Next, think about the types of sounds that you’d like to send to different devices.

The synchronization technology is not perfect, so in some cases a small offset between devices may occur. Ambience, sound effects, and speech appear to work better than, say, rhythmic music, which needs very accurate synchronization. The tool gives the producer an option to include a “calibration mode,” which allows the listener to correct small offsets. As a result, more types of content become feasible.
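To make the idea of a listener-corrected offset concrete, here is a minimal Python sketch. It is an assumption about how such a correction could be applied, not a description of how Audio Orchestrator implements its calibration mode; the function name, its parameters, and the sign convention are all hypothetical.

```python
def playback_position(shared_clock_s, start_time_s, calibration_offset_ms=0.0):
    """Return where in the audio this device should be playing, in seconds.

    shared_clock_s: current time on a timeline shared by all devices.
    start_time_s: shared-clock time at which the piece started.
    calibration_offset_ms: listener-set correction (hypothetical convention:
        a positive value makes this device play slightly ahead, which
        compensates for a device whose output arrives late).
    """
    position = shared_clock_s - start_time_s + calibration_offset_ms / 1000.0
    # Never seek before the start of the audio.
    return max(0.0, position)
```

In this sketch, a phone that sounds a quarter of a second late could be nudged with `calibration_offset_ms=250`, so that it seeks 0.25 seconds further into the audio than an uncorrected device on the same shared clock.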

In addition, it’s important to monitor the content in different ways, depending on the number and types of connected devices in use. It’s impossible to do this for every possible combination, but you should get an idea of how your experience might work if someone’s phone is out of sync, or if some audio objects suddenly drop out when someone has to take a call.

Finally, the R&D team has found it helpful to think about the content and what experience the listener should enjoy. For example, how many devices do you expect them to connect? How many people will be listening? Such considerations could change the types of sound design elements you need to create, or how to route them.